Artificial intelligence (AI) and machine learning have become essential technologies in almost every organization and business. They have made our lives easier and more comfortable than ever before. These technologies are designed to reduce human effort as much as possible. They are becoming a crucial part of strategic operations because human capabilities can be reproduced in software inexpensively and at scale. AI technologies are versatile and can be applied in nearly every sector to enable new possibilities, opportunities, and efficiencies.

AI technologies serve us in many ways: voice assistants (Alexa and Siri), driverless cars, chatbots that can help us at any time and in any place, worldwide map services (Google Maps), and advanced robots that can assist humans in almost every field. These numerous, tangible use cases are enabling revenue growth and cost savings across sectors. AI helps organizations, business leaders, and individuals make data-driven business decisions, and it can be applied to multiple processes within a business function. That is why AI and machine learning are rapidly gaining popularity, which in turn is increasing the demand for skilled and certified AI professionals. As a result, people interested in a career in this domain are seeking suitable AI programs and machine learning certification courses to help them secure a stable career.

In this article, we will discuss the history of artificial intelligence and how it has evolved over time.

What is Artificial Intelligence?

Artificial Intelligence is a set of tools and technologies that allow computer systems, robots, and machines to simulate human intelligence processes. It is a vast field that combines computer science, engineering, and robust datasets to mimic the problem-solving and decision-making capabilities of the human mind.

Put another way, Artificial Intelligence is the science and engineering of making intelligent machines and the computer programs that run them. It is an interdisciplinary science with multiple approaches, but advancements in machine learning and deep learning are creating a paradigm shift in almost every area of the AI industry. Modern AI is commonly described in terms of the two approaches below.

Human Approach – It involves systems that can think like humans and systems that act like humans.

Ideal Approach – It involves systems that can think rationally and systems that act rationally.

The term AI is frequently applied to the project of developing systems endowed with the intellectual processes characteristic of humans, such as reasoning, finding meaning, generalizing, and learning from past experience.

History of Artificial Intelligence

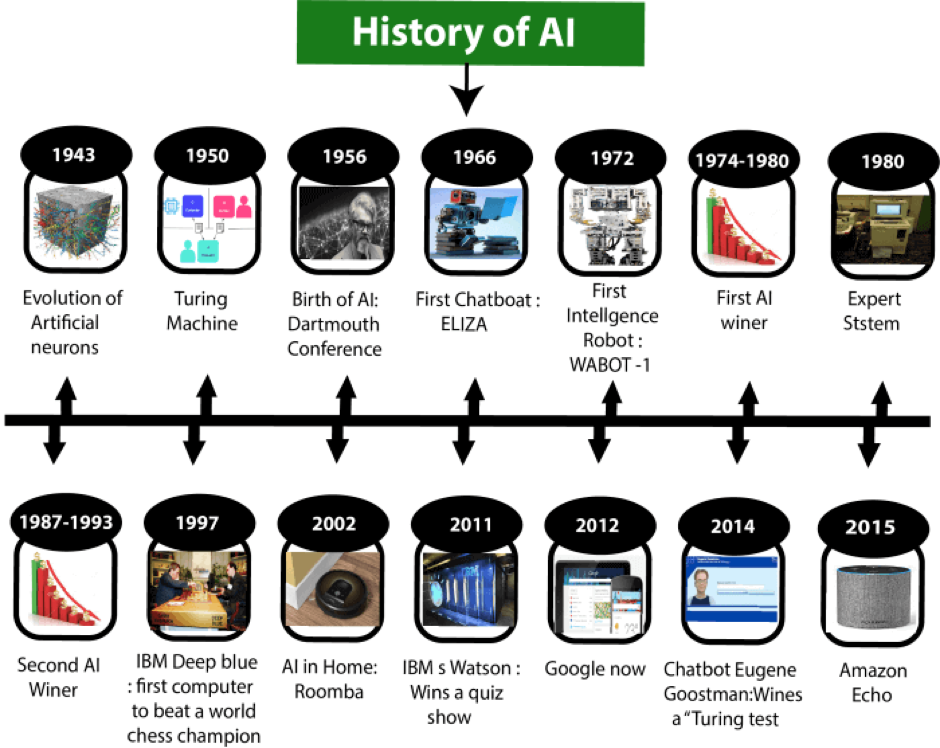

The idea of artificial intelligence is far older than the technology itself: myths of mechanical men appear in ancient Greek and Egyptian stories. The image above is a brief attempt to show the history of artificial intelligence at a glance.

The Year 1943- This is considered the introductory year for AI, as the first work in the field was completed when Warren McCulloch and Walter Pitts proposed a model of artificial neurons.

The Year 1949- Donald Hebb presented an updating rule for modifying the connection strength between neurons, now known as Hebbian learning.

The Year 1950- This year is considered a turning point for artificial intelligence. Alan Turing published the article “Computing Machinery and Intelligence,” in which he set out to answer the question “Can machines think?” and presented the Turing Test, which assesses a machine’s ability to exhibit intelligent behaviour equivalent to that of a human.

The Year 1955- The first artificial intelligence program, “Logic Theorist,” was created by Allen Newell and Herbert A. Simon. It went on to prove 38 of the first 52 theorems in Principia Mathematica and found new, more elegant proofs for some of them.

The Year 1956- The term “artificial intelligence” was adopted by American computer scientist John McCarthy at the Dartmouth Conference, and AI was established as an academic field. High-level programming languages such as FORTRAN, COBOL, and LISP were invented around this time.

The Year 1966- The golden era of artificial intelligence began. Researchers started developing algorithms that could solve mathematical problems, and the first chatbot, ELIZA, was developed by Joseph Weizenbaum in 1966.

The Year 1967- By this time, Frank Rosenblatt had built the Mark 1 Perceptron, the first computer-based neural network. Most accounts date the machine itself to the late 1950s.

The Year 1972- Japan presented a new wonder of artificial intelligence: WABOT-1, the first intelligent humanoid robot, built at Waseda University.

The Year 1980- The field boomed again in 1980 when AI returned in the form of “expert systems,” programs designed to emulate the decision-making ability of a human expert.

The Year 1997- IBM’s Deep Blue computer beat reigning world chess champion Garry Kasparov in a six-game match, the first time a computer had defeated a reigning world champion in a match.

The Year 2002- AI entered the home in the form of Roomba, a robotic vacuum cleaner.

The Year 2006- After entering homes, AI came into the business world. Companies such as Facebook, Twitter, and Netflix began using it in their products.

The Year 2011- IBM’s Watson won the quiz show Jeopardy!, where it had to answer difficult questions and riddles. It showed that computers can understand natural language and solve tricky questions quickly.

The Year 2012- Google launched “Google Now,” an Android feature that could predict and present useful information to users.

The Year 2014- The chatbot “Eugene Goostman” won a competition based on the famous Turing test, reportedly convincing about a third of the judges that it was human.

The Year 2015- Baidu’s Minwa supercomputer used a type of deep neural network called a convolutional neural network to identify and categorize images with higher accuracy than the average human.

The Year 2018- This year again belonged to IBM and Google. IBM presented “Project Debater,” and Google demonstrated “Duplex,” an AI program that acts as a virtual assistant.

The list of new and advanced innovations goes on. Artificial intelligence has a rich history, and scientists continue working to improve human life.